AI Agents Are Talking. Are You Listening?

If you ask most security teams who has access to their customer data, they can usually give you a clear answer. They can point to OAuth scopes, user permissions, API keys, and audit logs to back it up. However, if you ask which AI agents are exchanging that same data across tools like Salesforce, Slack, Google Drive, and Microsoft Teams, the answer is far less clear.

The agent-to-agent trust relationships behind those exchanges form when a chain executes and disappear when it completes. Individual API calls may leave traces in platform logs, but those traces are fragmented across systems and don't capture the composite trust chain or the full scope of what was shared between agents.

With Gartner predicting that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025, these implicit trust chains are growing faster than security teams can map them. The agents are already talking to each other, and most organizations have no way to see what data is being shared or what permissions are being used to share it.

The Trust Model Nobody Designed or Approved

In traditional SaaS integrations, trust is explicit. An OAuth consent screen tells you which application is requesting access, what scopes it needs, and which user authorized it. If something goes wrong, security teams can inspect the token and revoke it.

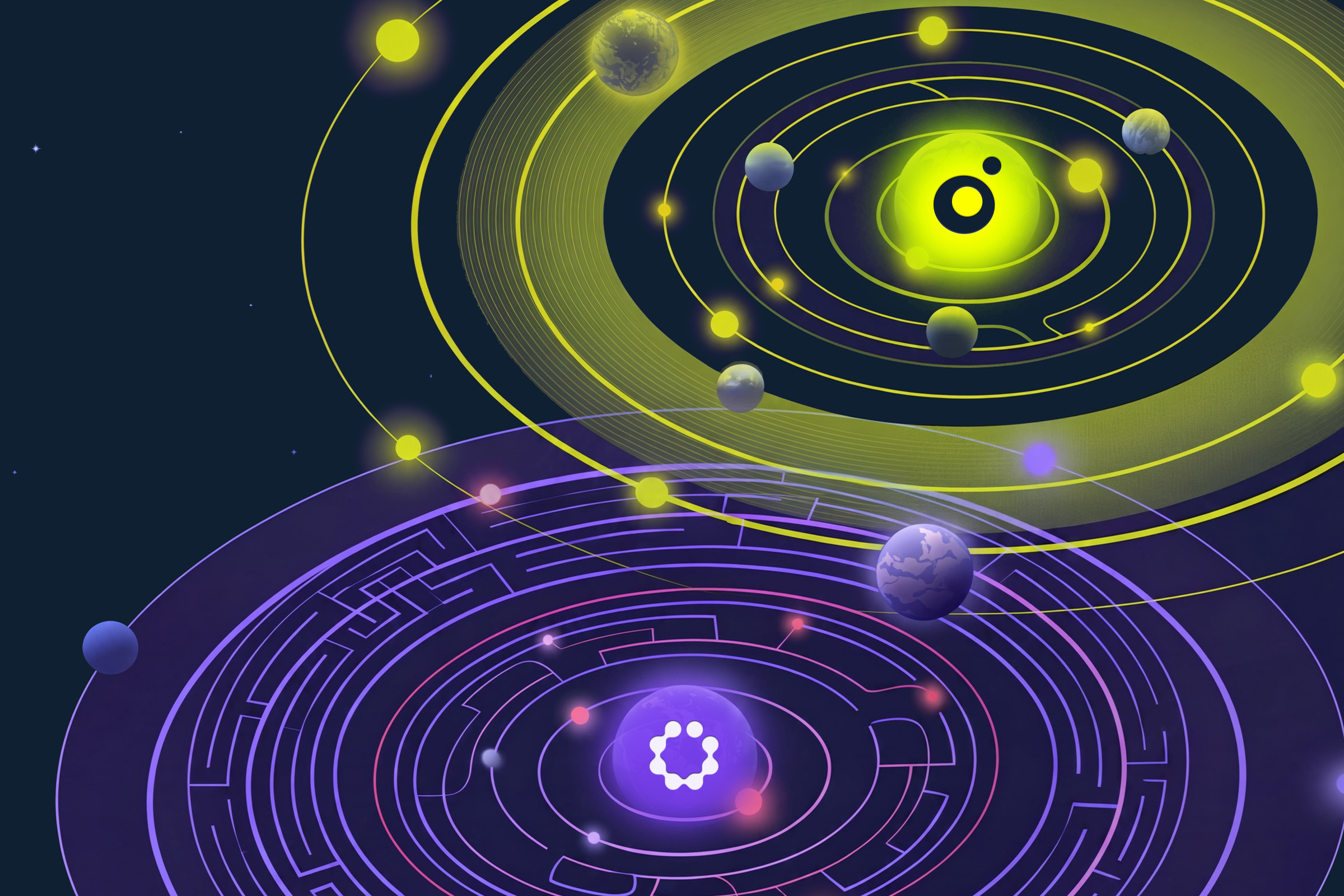

Agent-to-agent interactions work differently. When one AI agent calls another through a tool-use pattern or an MCP server connection, the trust relationship exists only at runtime, forming when the chain executes and disappearing when it completes. There's no consent screen, no persistent token, and no single log entry that captures what was shared between agents. Your CASB monitors traffic between users and cloud services, your IdP manages authentication for human identities, and your SIEM correlates events that follow well-defined log schemas.

None of these were built to observe runtime interactions between autonomous AI agents operating across multiple SaaS platforms, which means the fastest-growing category of trust relationships in your environment is also the one with the least visibility.

What Happens When One Agent Is Compromised

That lack of visibility compounds the damage when one agent in a chain is compromised. Whether the entry point is prompt injection, a poisoned tool-use response, or a compromised MCP server, the blast radius extends to every agent the compromised one interacts with. The attacker doesn't need to escalate privileges to move laterally. The compromised agent simply continues passing context, calling tools, and handing off to the next agent in the chain, except that the context it passes is now attacker-controlled.

What makes this different from traditional lateral movement is that it may generate no detectable anomaly at all. A human attacker tends to trigger signals such as unusual login times, abnormal access volumes, and impossible travel. An AI agent operating within its normal behavioral parameters produces none of these, because accessing data at scale and interacting with multiple systems in quick succession is part of its normal function.

The Questions That Matter Right Now

Addressing this requires a layer of visibility that most organizations haven’t built yet: agent interaction graphs that map which agents communicate with each other, what data flows between them, and what the composite scope of each chain looks like. These questions can help security teams assess where the gaps are.

Do We Know What Agents We Have?

Static inventories and periodic audits fall short because agent deployments change continuously. Business teams are deploying agents without waiting for security review, just as they did with shadow SaaS before them. Discovery therefore has to be continuous and cover sanctioned and unsanctioned agents alike.
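To make "continuous discovery" concrete, here is a minimal sketch that reconciles agent sightings against a sanctioned registry each time a new batch of activity arrives, rather than once per audit cycle. The `AgentSighting` event shape, the agent IDs, and the `reconcile_inventory` helper are all hypothetical; in practice the sightings would come from whatever platform audit logs or orchestration telemetry you already collect.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class AgentSighting:
    """One observation of an agent acting on a SaaS platform (hypothetical event shape)."""
    agent_id: str
    platform: str


def reconcile_inventory(
    sightings: Iterable[AgentSighting], sanctioned: set[str]
) -> dict[str, set[str]]:
    """Compare agents seen in recent activity with the sanctioned registry."""
    observed = {s.agent_id for s in sightings}
    return {
        # Approved agents that are actually running.
        "sanctioned_active": observed & sanctioned,
        # Agents nobody registered: the shadow-AI equivalent of shadow SaaS.
        "unsanctioned": observed - sanctioned,
        # Registered agents with no recent activity (candidates for cleanup).
        "sanctioned_idle": sanctioned - observed,
    }


sightings = [
    AgentSighting("sf-summarizer", "salesforce"),
    AgentSighting("slack-triage-bot", "slack"),
    AgentSighting("drive-indexer", "google_drive"),
]
report = reconcile_inventory(sightings, sanctioned={"sf-summarizer", "teams-notetaker"})
print(sorted(report["unsanctioned"]))
```

Running the reconciliation on every batch, instead of quarterly, is what turns an inventory into discovery: an unsanctioned agent shows up the first time it acts, not at the next audit.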

Can We See What They Connect To?

Knowing that an agent exists isn’t the same as knowing what it connects to at runtime. Security teams need to see the full interaction graph: the relationships between agents, the data flowing between them, and the tools each one invokes. Without this map, you can’t scope the blast radius of a compromised agent or identify chains that create unintended data exposure paths.
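One way to picture the interaction graph is as a directed graph whose edges are observed agent-to-agent calls, each annotated with the kinds of data seen on it. Scoping a blast radius then becomes a reachability question. The sketch below is a minimal illustration under that assumption; `InteractionGraph`, the agent names, and the data labels are hypothetical, and a real graph would be populated from runtime telemetry rather than by hand.

```python
from __future__ import annotations

from collections import defaultdict, deque


class InteractionGraph:
    """Directed graph of observed agent-to-agent calls (hypothetical model)."""

    def __init__(self) -> None:
        # caller -> {callee: set of data labels observed on that edge}
        self._edges: dict[str, dict[str, set[str]]] = defaultdict(dict)

    def record_call(self, caller: str, callee: str, data_labels: set[str]) -> None:
        """Record one runtime hand-off and the data categories it carried."""
        self._edges[caller].setdefault(callee, set()).update(data_labels)

    def blast_radius(self, compromised: str) -> set[str]:
        """Every agent reachable downstream of `compromised`, via breadth-first search."""
        reached: set[str] = set()
        queue = deque([compromised])
        while queue:
            agent = queue.popleft()
            # .get() avoids inserting empty entries into the defaultdict.
            for callee in self._edges.get(agent, {}):
                if callee != compromised and callee not in reached:
                    reached.add(callee)
                    queue.append(callee)
        return reached

    def exposed_data(self, compromised: str) -> set[str]:
        """Data labels carried on any edge leaving the compromised agent or its blast radius."""
        labels: set[str] = set()
        for agent in {compromised} | self.blast_radius(compromised):
            for edge_labels in self._edges.get(agent, {}).values():
                labels |= edge_labels
        return labels


graph = InteractionGraph()
graph.record_call("sf-agent", "summarizer", {"customer_pii"})
graph.record_call("summarizer", "slack-poster", {"summary"})
graph.record_call("drive-agent", "summarizer", {"contracts"})
print(sorted(graph.blast_radius("sf-agent")))
```

Only downstream edges are followed here, because that is where attacker-controlled context propagates; a fuller model might also consider agents that read shared state the compromised agent can write.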

Are We Evaluating Chain-Level Permissions?

Reviewing permissions per application or per user doesn't work when the effective scope is determined by how agents are composed into a chain. Permissions that are harmless in isolation can combine into what are often called toxic combinations. An agent with read-only access to customer data looks low-risk on its own, but connected to a chain that ends in a public-facing channel, it becomes a data exposure vector.
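The read-only-agent example can be expressed as a chain-level check: compute the composite scope of the chain and flag any source scope that appears at or before a sink scope. This is a minimal sketch assuming data flows from each agent to the next in order; the scope strings, the `TOXIC_PAIRS` rule list, and the helper names are hypothetical and don't correspond to any particular platform's permission model.

```python
from __future__ import annotations

# Hypothetical (source, sink) rules: data readable via `source` must not
# reach an agent that holds `sink` later in the same chain.
TOXIC_PAIRS = [("read:customer_data", "post:public_channel")]


def composite_scope(chain: list[str], scopes: dict[str, set[str]]) -> set[str]:
    """Union of every agent's scopes: the chain's effective permission set."""
    effective: set[str] = set()
    for agent in chain:
        effective |= scopes.get(agent, set())
    return effective


def toxic_combinations(chain: list[str], scopes: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Flag source scopes that appear at or before a sink scope in the chain."""
    findings = []
    for source, sink in TOXIC_PAIRS:
        src_pos = [i for i, agent in enumerate(chain) if source in scopes.get(agent, set())]
        sink_pos = [i for i, agent in enumerate(chain) if sink in scopes.get(agent, set())]
        # Data read early in the chain can flow to any later (or the same) agent.
        if src_pos and sink_pos and min(src_pos) <= max(sink_pos):
            findings.append((source, sink))
    return findings


scopes = {
    "crm-reader": {"read:customer_data"},
    "summarizer": set(),
    "community-poster": {"post:public_channel"},
}
print(toxic_combinations(["crm-reader", "summarizer", "community-poster"], scopes))
```

Each agent passes an individual review here; only the ordered chain is flagged. The ordering check is a deliberate simplification, since real chains can branch or loop.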

Can We See What Tools Agents Are Calling?

Security teams need to see not just which agents are active, but what tools they are calling, what data they are passing, and whether any chain exceeds a defined scope boundary. This requires instrumentation at the agent orchestration layer, something most organizations haven’t yet implemented.
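Instrumentation at the orchestration layer can be as simple as routing every tool invocation through a wrapper that records it and enforces the chain's scope boundary before the tool runs. The sketch below assumes a hypothetical `ToolCallMonitor` that your orchestrator calls instead of invoking tools directly; the tool names and the allow-list model are illustrative, not any framework's API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Callable


class ScopeViolation(Exception):
    """Raised when an agent calls a tool outside its chain's boundary."""


@dataclass
class ToolCallMonitor:
    """Hypothetical wrapper the orchestrator uses for every tool call in one chain."""
    chain_id: str
    allowed_tools: set[str]
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def call(self, agent: str, tool_name: str, tool: Callable[..., Any], **kwargs: Any) -> Any:
        allowed = tool_name in self.allowed_tools
        # Log argument names, not values, so the audit trail doesn't copy sensitive data.
        self.audit_log.append({
            "chain": self.chain_id,
            "agent": agent,
            "tool": tool_name,
            "args": sorted(kwargs),
            "allowed": allowed,
        })
        if not allowed:
            raise ScopeViolation(f"{agent} called {tool_name} outside chain {self.chain_id}")
        return tool(**kwargs)


monitor = ToolCallMonitor("chain-42", allowed_tools={"crm.read_account"})
record = monitor.call("sf-agent", "crm.read_account", lambda account: f"record:{account}", account="acme")
try:
    monitor.call("sf-agent", "slack.post_message", lambda text: None, text=record)
except ScopeViolation as err:
    print("blocked:", err)
```

Because the check happens before the tool executes, an out-of-scope call is both visible in the log and stopped, rather than merely recorded after the data has moved.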

Can We Kill an Agent Chain in Real Time?

If a chain begins accessing data or invoking tools outside its approved parameters, security teams need the ability to kill it immediately. This is analogous to session revocation for human users, but applied to multi-agent workflows that span multiple autonomous systems.
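A kill switch for agent chains can borrow the shape of session revocation: a shared registry of revoked chain IDs that the orchestrator consults before every hand-off. This is a minimal single-process sketch; `ChainKillSwitch`, `run_chain`, and the step functions are hypothetical, and a distributed deployment would need the registry in a shared store rather than in memory.

```python
from __future__ import annotations

import threading
from typing import Any, Callable


class ChainRevoked(Exception):
    """Raised when a revoked chain tries to continue."""


class ChainKillSwitch:
    """Thread-safe registry of revoked chain IDs (hypothetical, in-memory)."""

    def __init__(self) -> None:
        self._revoked: set[str] = set()
        self._lock = threading.Lock()

    def revoke(self, chain_id: str) -> None:
        with self._lock:
            self._revoked.add(chain_id)

    def check(self, chain_id: str) -> None:
        with self._lock:
            if chain_id in self._revoked:
                raise ChainRevoked(chain_id)


def run_chain(chain_id: str, steps: list[Callable[[Any], Any]],
              switch: ChainKillSwitch, context: Any) -> Any:
    """Run each agent step in order, checking for revocation before every hand-off."""
    for step in steps:
        switch.check(chain_id)
        context = step(context)
    return context


switch = ChainKillSwitch()
calls: list[str] = []


def fetch(ctx: int) -> int:
    calls.append("fetch")
    return ctx + 1


def summarize(ctx: int) -> int:
    calls.append("summarize")
    switch.revoke("chain-7")  # simulates a security team revoking the chain mid-run
    return ctx + 1


def publish(ctx: int) -> int:
    calls.append("publish")
    return ctx + 1
```

The check runs between steps, so revocation stops the next hand-off but does not interrupt a step already in flight; tighter guarantees would require the tools themselves to consult the registry.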

Getting Ahead of This Problem as an Industry

If your organization answered “no” to most of these questions, you’re not alone. Most security programs were built for human identities and explicit trust relationships, not for implicit trust chains between autonomous agents, and closing the gap means treating agent interaction security as its own discipline.

The MAESTRO framework provides a structured starting point for threat modeling in multi-agent environments, offering a layered approach to identifying risks across the agentic architecture. Its foundational requirement is the same one this post keeps returning to: you can't secure agent chains you can't see.

AI agents are already talking to each other across SaaS environments, and the organizations that build visibility into those interactions now, enforcing controls at the chain level, will be the ones best positioned to adopt agentic AI at scale.

Gal Nakash

ABOUT THE AUTHOR

Gal is the Cofounder & CPO of Reco and a former Lieutenant Colonel in the Israeli Prime Minister's Office. A tech enthusiast with a background as a security researcher and hacker, Gal has led teams across multiple cybersecurity areas, with expertise in the human element.
